What’s the point of spending tens of thousands on security infrastructure, getting it all configured properly, and running penetration tests that come back clean, only to have someone in accounts click on a fake invoice three months later and compromise the entire network?

I’ve been thinking about why this keeps happening, and I don’t think it’s actually a technology problem.

Technical security controls fail when organisational culture treats security as an IT problem rather than a business responsibility shared across all employees. The technology works – as long as people do what they need to.

The UK government’s latest data shows phishing attacks affected 85% of businesses that experienced security incidents. But only 19% of UK businesses conduct regular staff training on cyber security.

People Keep Clicking

We used to think the problem was that people weren’t paying attention. That if we just made the training more engaging or the warnings more visible, they’d stop falling for social engineering. We were wrong.

Most security training still focuses on spotting spelling mistakes in emails and avoiding suspicious links. That may have worked in 2016, but in 2026 AI-generated phishing emails have perfect grammar, reference real projects you’re working on (scraped from LinkedIn), and come from compromised legitimate accounts. The old tells don’t work anymore.

Security training has to be more than a compliance exercise that teaches people to spot threats that barely exist anymore, more than an annual module about not clicking suspicious links. Meanwhile, attackers are using AI to generate personalized spear-phishing campaigns at scale, creating deepfake audio for business email compromise, and exploiting supply chain vulnerabilities that your team don’t even know exist.

Your team don’t resist security because they’re careless. They resist because training is abstract, outdated, and disconnected from the actual threat landscape.

Controls without context feel like obstacles, not protection.

Security policies get written by technical teams who understand zero trust architecture but don’t understand how people work. A restaurant manager closing at midnight after a 14-hour shift will approve a payment request on their phone if it looks legitimate – even if it bypasses your carefully designed approval workflows. That manager has no way of knowing that the supplier’s email was compromised weeks ago.

Security policies that require cognitive effort during high-stress moments risk getting bypassed. We can call that user error or we can recognize that it’s a feature of the system rather than a glitch.

Verizon’s 2025 Data Breach Investigations Report found that 60% of breaches involve a human element – errors, social engineering, or credential misuse. That percentage hasn’t improved despite AI-powered security tools, passwordless authentication, and zero trust implementations.

The defensive technology has advanced but the offensive technology has advanced faster. And humans are still the gap.

We’ve tried changing behaviour through policy documents and annual training modules teaching outdated threat recognition. We haven’t tried helping people understand that AI has fundamentally changed the threat landscape, that the old rules about “suspicious emails have spelling mistakes” don’t apply anymore, and that verification processes exist because deepfakes are now indistinguishable from reality.

Manufacturing learned in the 1980s that you can’t make workplaces safe just by posting safety rules and expecting compliance. The companies that reduced workplace injuries built safety into the workflow itself. We have to stop trying to bolt 2026 security controls onto workflows designed for 2016 threats.

The Thing Nobody Says Out Loud About Cyber Security

Security friction slows workflows. Humans are naturally lazy and will, by default, optimize for ease of execution. If you won’t acknowledge this tension, you can’t address it.

A salesperson trying to close a deal before month-end may share a confidential proposal via personal Gmail if the approved file transfer system takes six clicks and requires IT approval. A startup founder racing toward a funding deadline will reuse passwords across platforms because remembering unique credentials isn’t the best use of her cognitive energy.

Take, for example, a boutique hotel group with four properties which installed sophisticated access controls requiring multi-factor authentication (MFA) for everything. Excellent security in theory.

But if authentication adds time during busy periods, staff will soon develop workarounds: shared credentials, sessions left open on unattended terminals, and even using the general manager’s login when guests are waiting, because hers doesn’t need the second authentication step (for reasons nobody understands).

Designing cyber security that aligns with workflow rather than disrupting it means asking what your team actually do all day. Where are the friction points? How do we create protection that feels invisible?

Businesses that involve front-line staff in policy design before implementation end up with policies that actually get followed, because they make operational sense.

Security policies can feel like expressions of institutional distrust. Tell someone their email will be monitored, their web browsing logged, and their file transfers restricted, and the implicit message is “we don’t trust you.” People come to view security as adversarial. They look for ways around restrictions because the restrictions feel personal.

There are legitimate reasons to monitor certain activities, and genuine insider threats exist. But if your security model assumes every team member is potentially malicious, what kind of culture and operating procedures are you creating? And what does that cost you in terms of good people who resent being treated like threats?

How To Get It Right

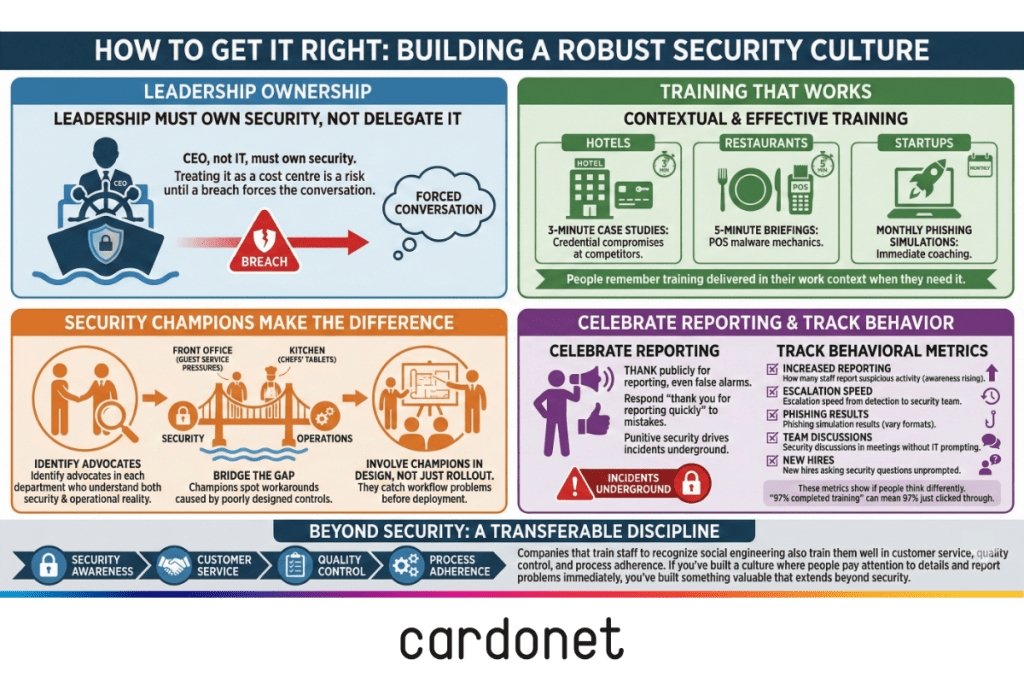

Leadership must own cyber security, not delegate it to IT. If your CEO treats security as a cost centre, you’re stuck until a breach forces the conversation.

Training that works:

- Hotels: 3-minute case studies about credential compromises at competitors

- Restaurants: 5-minute briefings on POS malware mechanics

- Startups: monthly phishing simulations with immediate coaching

People remember training delivered in their work context when they need it.

Security champions make the difference. Identify advocates in each department who understand both security and operational reality. Front office champions know guest service pressures. Kitchen champions understand how chefs use tablets. They spot workarounds caused by poorly designed controls.

Involve champions in security design, not just rollout. They catch workflow problems before deployment.

Celebrate reporting. When someone reports suspicious activity – even false alarms – thank them publicly. When someone admits clicking a phishing link, respond “thank you for reporting quickly,” not with discipline.

Punitive security drives incidents underground. A team that fears blame will hide breaches for days.

You can also track behavioral metrics:

- How many staff report suspicious activity (increasing = awareness rising, not threats increasing)

- Escalation speed from detection to security team

- Phishing simulation results (vary formats or people learn your tests)

- Security discussions in team meetings without IT prompting

- New hires asking security questions unprompted

These metrics show whether people actually think differently about security.
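As a rough illustration, the first two metrics in the list above could be tracked with a few lines of Python. This is a minimal sketch, not a recommended tool: the `Report` record, its field names, the function names, and the sample data are all hypothetical, and it assumes you already log who reported what and when it reached the security team.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class Report:
    """One suspicious-activity report (hypothetical record shape)."""
    reporter: str
    detected_at: datetime   # when the staff member noticed the issue
    escalated_at: datetime  # when it reached the security team


def reporting_rate(reports: list[Report], headcount: int) -> float:
    """Share of staff who filed at least one report in the period."""
    if headcount <= 0:
        raise ValueError("headcount must be positive")
    return len({r.reporter for r in reports}) / headcount


def median_escalation_minutes(reports: list[Report]) -> float:
    """Median time from detection to escalation, in minutes."""
    if not reports:
        raise ValueError("no reports to measure")
    return median(
        (r.escalated_at - r.detected_at).total_seconds() / 60 for r in reports
    )


# Made-up sample data: one month of reports at a 20-person site.
sample_reports = [
    Report("amira", datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 9, 10)),
    Report("ben", datetime(2026, 1, 12, 14, 0), datetime(2026, 1, 12, 14, 30)),
    Report("amira", datetime(2026, 1, 20, 16, 0), datetime(2026, 1, 20, 16, 50)),
]

print(f"Reporting rate: {reporting_rate(sample_reports, headcount=20):.0%}")
print(f"Median escalation: {median_escalation_minutes(sample_reports):.0f} min")
```

The trend matters more than any single month's figure: a rising reporting rate and a falling escalation time suggest awareness is improving, even if the raw number of reports goes up.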

Remember that “97% completed training” can mean that 97% clicked through to stop notifications. Nothing more.

Companies that train their team to recognize social engineering also train them well in customer service, quality control, and process adherence. The discipline is transferable. If you’ve built a culture where people pay attention to details, question things that seem wrong, and report problems immediately, you’ve built something valuable that extends beyond security.

What To Do Next

UK businesses experienced approximately 8.58 million cyber crimes in the past year. If you’re reading this because your organisation experienced a security incident involving human error, you’re not alone.

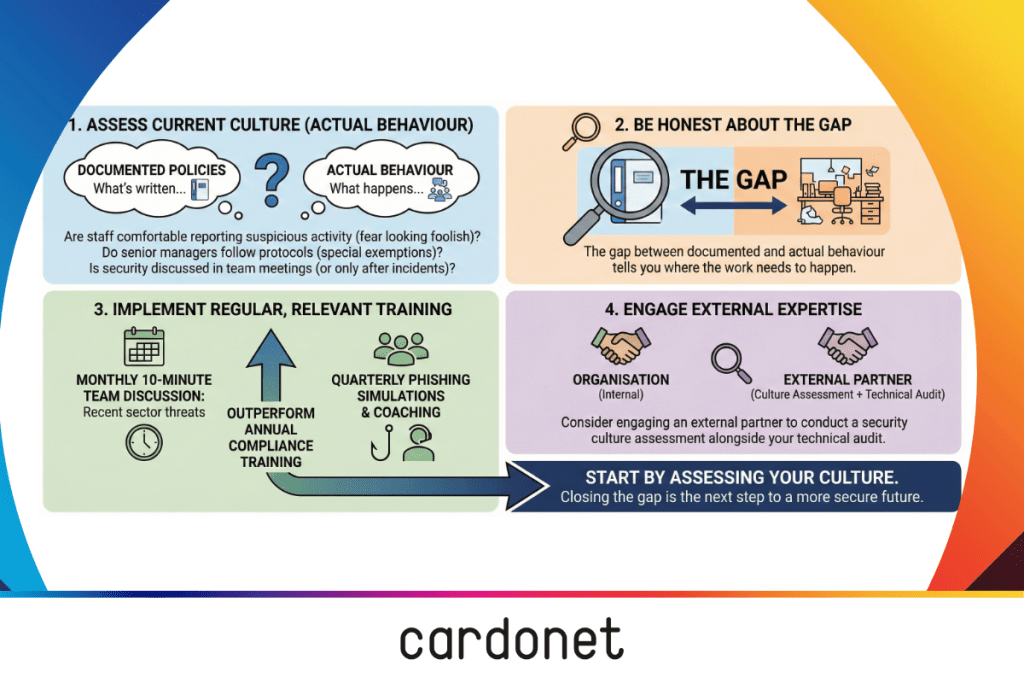

Start by assessing your current security culture – not your documented policies, but how people actually behave. Are your team comfortable reporting suspicious activity, or do they fear looking foolish? Do senior managers follow the same protocols they expect from everyone else, or are there special exemptions for executives? Is security discussed in team meetings, or only after incidents?

Be honest about what you find. The gap between documented and actual behaviour tells you where the work needs to happen.

Implement regular training that connects to people’s actual work. A monthly 10-minute team discussion about recent threats in your sector, combined with quarterly phishing simulations and immediate coaching, is likely to outperform annual compliance training by a wide margin.

Consider engaging an external partner to conduct a security culture assessment alongside your technical audit. Discover more about our suggested Cyber Security Journey here.

At Cardonet, we help organisations understand not just where technical controls have gaps, but where culture creates vulnerability. Sometimes an external voice helps surface things people already know but haven’t felt able to discuss internally.

FAQs: Security Culture in 2026

How much should we budget for security awareness training that covers modern threats?

This depends – speak to us for a quotation. The real cost is management time: 30 minutes monthly for team discussions about emerging threats, immediate response to reported incidents, visible leadership engagement. Organizations treating this as annual compliance get no results. Those treating it as ongoing adaptation to evolving threats see behavioral change. Measure prevented incidents, not completion rates.

What if employees resist modern security requirements because they’re disruptive?

Then you probably haven’t explained why 2026 security is necessary. Modern security – zero trust, continuous verification, conditional access – is more disruptive than perimeter-based 2016 security. Resistance means you’ve implemented controls without explaining what’s changed about threats. Involve operational teams in understanding modern attack vectors and designing implementations that maintain protection while minimizing workflow disruption. Security designed with people who understand both threats and operations doesn’t get bypassed.

Should we punish employees who fall for AI-generated social engineering?

No. Sophisticated AI-generated attacks fool security-aware people regularly. Punishment drives incidents underground. The goal is immediate reporting of suspected deepfakes, AI-generated fraud, supply chain anomalies – even when people aren’t certain. The moment someone fears discipline for falling for sophisticated social engineering, they’ll hide it, wasting crucial response time. Thank people for reporting promptly regardless of outcome. Even experts get fooled by 2026 threats.

How do we measure whether security culture is keeping pace with evolving threats?

Track behavior against current threats, not static training completion. Monitor:

- staff reporting suspected AI communications, deepfakes, supply chain anomalies (increasing reports = rising awareness, not more threats)

- whether people verify unusual requests through alternate channels unprompted (stops most AI fraud)

- security discussions referencing recent threats in team meetings

- new hires asking about modern threats without prompting

These show whether awareness evolves with threats. Completion metrics for static training modules are meaningless.

We’re a small business – can we afford security culture that addresses 2026 threats?

You can’t afford not to. Small businesses face the same sophisticated threats as big businesses – AI phishing, ransomware, supply chain attacks – but have less technology to compensate. You can’t match enterprise security budgets, but you can match enterprise security culture, supported by appropriately scaled tools. You need monthly discussions about sector-specific threats, visible leadership engagement, and simple reporting processes alongside cloud-native security tools at appropriate scale.
